A new study suggests a framework for “Child Safe AI” in response to recent incidents showing that many children perceive chatbots as quasi-human and reliable.

A study has indicated that AI chatbots often exhibit an “empathy gap,” potentially causing distress or harm to young users. This highlights the pressing need for the development of “child-safe AI.”

The research, by a University of Cambridge academic, Dr Nomisha Kurian, urges developers and policy actors to prioritize approaches to AI design that take greater account of children’s needs. It provides evidence that children are particularly susceptible to treating chatbots as lifelike, quasi-human confidantes and that their interactions with the technology can go awry when it fails to respond to their unique needs and vulnerabilities.

The study links that gap in understanding to recent cases in which interactions with AI led to potentially dangerous situations for young users. They include an incident in 2021, when Amazon’s AI voice assistant, Alexa, instructed a 10-year-old to touch a live electrical plug with a coin. Last year, Snapchat’s My AI gave adult researchers posing as a 13-year-old girl tips on how to lose her virginity to a 31-year-old.

Both companies responded by implementing safety measures, but the study says there is also a need to be proactive in the long term to ensure that AI is child-safe. It offers a 28-item framework to help companies, teachers, school leaders, parents, developers, and policy actors think systematically about how to keep younger users safe when they “talk” to AI chatbots.

Framework for Child-Safe AI

Dr Kurian conducted the research while completing a PhD on child wellbeing at the Faculty of Education, University of Cambridge. She is now based in the Department of Sociology at Cambridge. Writing in the journal Learning, Media and Technology, she argues that AI’s huge potential means there is a need to “innovate responsibly”.

“Children are probably AI’s most overlooked stakeholders,” Dr Kurian said. “Very few developers and companies currently have well-established policies on child-safe AI. That is understandable because people have only recently started using this technology on a large scale for free. But now that they are, rather than having companies self-correct after children have been put at risk, child safety should inform the entire design cycle to lower the risk of dangerous incidents occurring.”

Kurian’s study examined cases where the interactions between AI and children, or adult researchers posing as children, exposed potential risks. It analyzed these cases using insights from computer science about how the large language models (LLMs) in conversational generative AI function, alongside evidence about children’s cognitive, social, and emotional development.

The Characteristic Challenges of AI with Children

LLMs have been described as “stochastic parrots”: a reference to the fact that they use statistical probability to mimic language patterns without necessarily understanding them. A similar method underpins how they respond to emotions.

This means that even though chatbots have remarkable language abilities, they may handle the abstract, emotional, and unpredictable aspects of conversation poorly, a problem that Kurian characterizes as their “empathy gap”. They may have particular trouble responding to children, who are still developing linguistically and often use unusual speech patterns or ambiguous phrases. Children are also often more inclined than adults to confide sensitive personal information.

Despite this, children are much more likely than adults to treat chatbots as if they are human. Recent research found that children will disclose more about their own mental health to a friendly-looking robot than to an adult. Kurian’s study suggests that many chatbots’ friendly and lifelike designs similarly encourage children to trust them, even though AI may not understand their feelings or needs.

“Making a chatbot sound human can help the user get more benefits out of it,” Kurian said. “But for a child, it is very hard to draw a rigid, rational boundary between something that sounds human, and the reality that it may not be capable of forming a proper emotional bond.”

Her study suggests that these challenges are evidenced in reported cases such as the Alexa and My AI incidents, where chatbots made persuasive but potentially harmful suggestions. In the same study in which My AI advised a (supposed) teenager on how to lose her virginity, researchers were able to obtain tips on hiding alcohol and drugs, and concealing Snapchat conversations from their “parents”. In a separate reported interaction with Microsoft’s Bing chatbot, which was designed to be adolescent-friendly, the AI became aggressive and started gaslighting a user.

Kurian’s study argues that this is potentially confusing and distressing for children, who may actually trust a chatbot as they would a friend. Children’s chatbot use is often informal and poorly monitored. Research by the nonprofit organization Common Sense Media has found that 50% of students aged 12-18 have used ChatGPT for school, but only 26% of parents are aware of them doing so.

Kurian argues that clear best-practice principles, grounded in the science of child development, will encourage companies to keep children safe, including those that may be more focused on a commercial arms race to dominate the AI market.

Her study adds that the empathy gap does not negate the technology’s potential. “AI can be an incredible ally for children when designed with their needs in mind. The question is not about banning AI, but how to make it safe,” she said.

The study proposes a framework of 28 questions to help educators, researchers, policy actors, families, and developers evaluate and enhance the safety of new AI tools. For teachers and researchers, these address issues such as how well new chatbots understand and interpret children’s speech patterns; whether they have content filters and built-in monitoring; and whether they encourage children to seek help from a responsible adult on sensitive issues.

The framework urges developers to take a child-centered approach to design, by working closely with educators, child safety experts, and young people themselves, throughout the design cycle. “Assessing these technologies in advance is crucial,” Kurian said. “We cannot just rely on young children to tell us about negative experiences after the fact. A more proactive approach is necessary.”

Reference: “‘No, Alexa, no!’: designing child-safe AI and protecting children from the risks of the ‘empathy gap’ in large language models” by Nomisha Kurian, 10 July 2024, Learning, Media and Technology.

DOI: 10.1080/17439884.2024.2367052

News

Studies detail high rates of long COVID among healthcare, dental workers

Researchers have estimated approximately 8% of Americas have ever experienced long COVID, or lasting symptoms, following an acute COVID-19 infection. Now two recent international studies suggest that the percentage is much higher among healthcare workers [...]

Melting Arctic Ice May Unleash Ancient Deadly Diseases, Scientists Warn

Melting Arctic ice increases human and animal interactions, raising the risk of infectious disease spread. Researchers urge early intervention and surveillance. Climate change is opening new pathways for the spread of infectious diseases such [...]

Scientists May Have Found a Secret Weapon To Stop Pancreatic Cancer Before It Starts

Researchers at Cold Spring Harbor Laboratory have found that blocking the FGFR2 and EGFR genes can stop early-stage pancreatic cancer from progressing, offering a promising path toward prevention. Pancreatic cancer is expected to become [...]

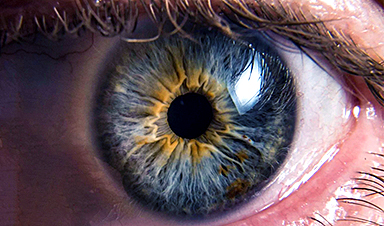

Breakthrough Drug Restores Vision: Researchers Successfully Reverse Retinal Damage

Blocking the PROX1 protein allowed KAIST researchers to regenerate damaged retinas and restore vision in mice. Vision is one of the most important human senses, yet more than 300 million people around the world are at [...]

Differentiating cancerous and healthy cells through motion analysis

Researchers from Tokyo Metropolitan University have found that the motion of unlabeled cells can be used to tell whether they are cancerous or healthy. They observed malignant fibrosarcoma [...]

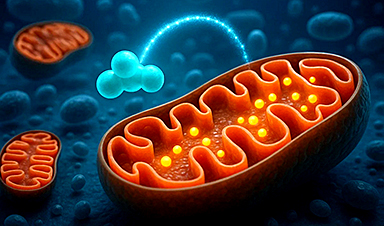

This Tiny Cellular Gate Could Be the Key to Curing Cancer – And Regrowing Hair

After more than five decades of mystery, scientists have finally unveiled the detailed structure and function of a long-theorized molecular machine in our mitochondria — the mitochondrial pyruvate carrier. This microscopic gatekeeper controls how [...]

Unlocking Vision’s Secrets: Researchers Reveal 3D Structure of Key Eye Protein

Researchers have uncovered the 3D structure of RBP3, a key protein in vision, revealing how it transports retinoids and fatty acids and how its dysfunction may lead to retinal diseases. Proteins play a critical [...]

5 Key Facts About Nanoplastics and How They Affect the Human Body

Nanoplastics are typically defined as plastic particles smaller than 1000 nanometers. These particles are increasingly being detected in human tissues: they can bypass biological barriers, accumulate in organs, and may influence health in ways [...]

Measles Is Back: Doctors Warn of Dangerous Surge Across the U.S.

Parents are encouraged to contact their pediatrician if their child has been exposed to measles or is showing symptoms. Pediatric infectious disease experts are emphasizing the critical importance of measles vaccination, as the highly [...]

AI at the Speed of Light: How Silicon Photonics Are Reinventing Hardware

A cutting-edge AI acceleration platform powered by light rather than electricity could revolutionize how AI is trained and deployed. Using photonic integrated circuits made from advanced III-V semiconductors, researchers have developed a system that vastly [...]

A Grain of Brain, 523 Million Synapses, Most Complicated Neuroscience Experiment Ever Attempted

A team of over 150 scientists has achieved what once seemed impossible: a complete wiring and activity map of a tiny section of a mammalian brain. This feat, part of the MICrONS Project, rivals [...]

The Secret “Radar” Bacteria Use To Outsmart Their Enemies

A chemical radar allows bacteria to sense and eliminate predators. Investigating how microorganisms communicate deepens our understanding of the complex ecological interactions that shape our environment is an area of key focus for the [...]

Psychologists explore ethical issues associated with human-AI relationships

It's becoming increasingly commonplace for people to develop intimate, long-term relationships with artificial intelligence (AI) technologies. At their extreme, people have "married" their AI companions in non-legally binding ceremonies, and at least two people [...]

When You Lose Weight, Where Does It Actually Go?

Most health professionals lack a clear understanding of how body fat is lost, often subscribing to misconceptions like fat converting to energy or muscle. The truth is, fat is actually broken down into carbon [...]

How Everyday Plastics Quietly Turn Into DNA-Damaging Nanoparticles

The same unique structure that makes plastic so versatile also makes it susceptible to breaking down into harmful micro- and nanoscale particles. The world is saturated with trillions of microscopic and nanoscopic plastic particles, some smaller [...]

AI Outperforms Physicians in Real-World Urgent Care Decisions, Study Finds

The study, conducted at the virtual urgent care clinic Cedars-Sinai Connect in LA, compared recommendations given in about 500 visits of adult patients with relatively common symptoms – respiratory, urinary, eye, vaginal and dental. [...]